Phil Crissman explains Propositions as Types with a dialogue between Achilles and the Tortoise, in the style of Douglas Hofstadter (who in turn was inspired by Lewis Carroll). Lambda Man makes an appearance.

This is the first part of a miniseries on this year’s Symposium on Principles of Programming Languages, a.k.a. POPL 2026, hosted by Jessica Foster.

In this episode, we talk about: undergrad funding and participation, the behind the scenes of AV, choreographic programming, quantum languages, conference catering, and the joy of theory. And at one point, you’ll even hear us get kicked out of the venue mid-interview. Enjoy!

One example is the observation that adjoint mode automatic differentiation isn't a separate algorithm from forward mode automatic differentiation but a composition of forward mode and transposition. I talked about this in an old paper of mine, Two Tricks for the Price of One, and it resurfaced more recently in Jax: You Only Linearize Once.

Another example is the REINFORCE algorithm, used to differentiate a class of stochastic processes despite making hard decisions when sampling from a distribution. It turns out this algorithm is nothing but ordinary everyday importance sampling, generalized to probabilities lying in a non-standard algebraic structure. I describe it in a blog article here and you can find out more about extending beyond the non-negative reals in a paper by Abramsky and Brandenburger. You sort of don't have to lift a finger to implement REINFORCE: it just happens "for free" when you switch algebraic structure.

Here's a teeny tiny example of another "for free" method that just appears when you switch types.

> module Main where
>
> import qualified Data.Map.Strict as Map
> import Control.Applicative
> import Control.Monad
>
> newtype V s a = V { unV :: [(a, s)] }
>   deriving (Show)
>
> instance Functor (V s) where
>   fmap f (V xs) = V [ (f a, s) | (a, s) <- xs ]
>
> instance Num s => Applicative (V s) where
>   pure x = V [(x, 1)]
>   mf <*> mx = do { f <- mf; x <- mx; return (f x) }
>
> instance Num s => Monad (V s) where
>   (V xs) >>= f = V [ (b, s * t) |
>                      (a, s) <- xs, (b, t) <- unV (f a) ]
>
> instance Num s => Alternative (V s) where
>   empty = V []
>   (<|>) = add
>
> instance Num s => MonadPlus (V s) where
>   mzero = empty
>   mplus = (<|>)
>
> normalize :: (Ord a, Num s, Eq s) => V s a -> V s a
> normalize (V xs) = V $ Map.toList $
>   Map.filter (/= 0) $ Map.fromListWith (+) xs
>
> scale :: Num s => s -> V s a -> V s a
> scale c (V xs) = V [ (a, c * s) | (a, s) <- xs ]
>
> add :: Num s => V s a -> V s a -> V s a
> add (V xs) (V ys) = V (xs ++ ys)

> insert' :: [(k, v)] -> k -> v -> [(k, v)]
> insert' dict k v = (k, v) : dict

It's not very clever, but it does function as a dictionary builder. Let's write it in a more general way using the fact that the list functor is applicative:

> insert :: Alternative m => m (k, v) -> k -> v -> m (k, v)
> insert dict k v = pure (k, v) <|> dict

We can go ahead and insert some entries:

> main :: IO ()
> main = do
>   let dict1 = empty :: [(Int, Int)]
>   let dict2 = insert dict1 0 1
>   let dict3 = insert dict2 1 0
>   print dict3

Now let's use V instead of []:

>   let dict4 = empty :: V Double (Int, Int)
>   let dict5 = insert dict4 0 1
>   let dict6 = insert dict5 1 0
>   print $ normalize dict6

What has happened is that we've replaced the dictionary update with

D' = D + k⊗v

Addition, tensor product, these things are more amenable to differentiation than appending to a list. When using the vector space monad (or applicative) the (,) operator plays a role more like tensor product.

Note that we're also no longer limited to inserting basis elements, we can use any suitably typed vectors:

>   let dict7 = dict6 <|>
>         (0.5 `scale` pure (0, 0) <|> 0.5 `scale` pure (1, 1))
>   print $ normalize dict7

This is the key ingredient in the update rule in DeltaNet, described in Parallelizing Linear Transformers with the Delta Rule over Sequence Length at the start of section 2.2.

I was previously interested to see how die rolls in an RPG appear when conditioned on you having survived an unlikely situation. As might have been predicted, if the die rolls contribute to that survival in a largely additive way, for example by being damage scored against a large opponent, then the posterior distribution of the rolls looks exponentially tilted.

But the only virtue of the brute force Monte Carlo method I used was that it was easy to code. It's computationally wasteful. So I wrote a much more performant DSL in Python which I have put on github.

It uses numpy to achieve tolerable numerical performance, but in addition it uses two techniques beyond brute force to make it usable.

One challenge with a probabilistic language is to manage state.

First there's state "in the past": if you're computing probabilities that are sums of many large intermediate states, for example 100 die rolls, you risk falling foul of combinatorial explosion. If you write a loop like:

    for i in range(100):
        t += d(6)

you want to be sure that the += operation erases history (ie. previous values of t) so you aren't tracking all 6^100 individual histories. In this case it's easy but in other cases it might not be so obvious that you have unneeded state lying around. So the code has a simple (and incomplete) backward liveness pass to insert deletions of data that won't be used again. Whenever state is deleted, you can merge histories that are now indistinguishable.
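To make the point about merging histories concrete, here is a minimal pure-Python sketch. It is not how the DSL is implemented; the name `add_die` and the dictionary representation are assumptions made for illustration. It tracks only how many histories lead to each running total, so histories that have become indistinguishable are merged at every step.

```python
from collections import defaultdict

def add_die(ways, sides=6):
    """Add one die roll to a table mapping running total -> number of histories.

    Histories that arrive at the same total are merged, so the table never
    holds more entries than there are distinct totals.
    """
    out = defaultdict(int)
    for total, count in ways.items():
        for face in range(1, sides + 1):
            out[total + face] += count
    return dict(out)

ways = {0: 1}  # one empty history with total 0
for _ in range(100):
    ways = add_die(ways)

# Only a few hundred merged states remain, although they summarise 6**100 histories.
print(len(ways), sum(ways.values()) == 6**100)
```

Dividing each count by 6**100 gives the exact probability of each total.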

And then there is state "in the future": sometimes you'd like to compute probabilities of data structures like lists but materializing a list results in state that can cause combinatorial explosion. So I support Python style generators allowing you to generate data lazily - for example permutations of cards. So we can bring into existence state just before we need it.

There's another important technique I used: this code uses brute force (though at this point maybe I should stop calling it brute force) so there are many states, each corresponding to a possible set of values for some numpy objects. And we often want to perform a numpy operation for each of these values. We don't need to loop. In many cases a parameterised family of numpy operations is in fact a single numpy operation. This kind of transformation is ubiquitous in GPU computing. So we can interpret the following D&D fight, summing over the combinatorially large number of ways it could happen, in a few seconds:

    @d9.dist
    def f():
        # Brachiosaurus (Monster Manual 1e p. 24)
        hp1 = lazy_sum(36 @ d(8))
        # Tyrannosaurus Rex (Monster Manual 1e p. 28)
        hp2 = lazy_sum(18 @ d(8))
        for i in range(14):
            print("round", i)
            if hp1 > 0 and d(20) > 1:
                hp2 = max(0, hp2 - lazy_sum((x for x in 5 @ d(4))))
            if hp2 > 0:
                # Two claws...
                if d(20) > 1:
                    hp1 -= d(6)
                if d(20) > 1:
                    hp1 -= d(6)
                # ...and a bite
                if d(20) > 1:
                    hp1 -= lazy_sum((x for x in 5 @ d(8)))
                hp1 = max(hp1, 0)
        win1 = hp2 == 0
        win2 = hp1 == 0
        return win1, win2

Besides its own test suite I also used a large number of questions on the RPG Stack Exchange to build a library of examples for testing.

Of mathematical interest: most of the probability computations take place in a semiring. So most of the numerical computing is simply addition and multiplication. When that's all you're doing, there is the well known technique for working with large integers where you work modulo p[i] for some array of primes and only at the end reconstruct your final result using the Chinese remainder theorem. Less well known is that this works for rationals also. (I conjectured this was true, started deriving it myself, and then learnt there are published methods.) This means we can work with exact rational arithmetic using numpy without the need for a bignum library. This code turns out to be related to provenance semirings as it effectively becomes a simple database tracking the provenance of each record.
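As an illustration of the rational version of this trick, here is a small pure-Python sketch. It is not the code from the library: the primes, the helper names (`eval_mod`, `crt`, `rational_reconstruct`) and the example expression are all assumptions chosen for the demonstration. It evaluates 1/3 + 2/7 − 5/11 separately modulo three primes, combines the residues with the Chinese remainder theorem, and recovers the fraction with the extended Euclidean algorithm (a standard rational reconstruction method).

```python
from fractions import Fraction
from math import isqrt

# Three well-known primes below 2**31, so products of two residues fit in int64.
PRIMES = [998244353, 1000000007, 1000000009]

def eval_mod(p):
    """Evaluate 1/3 + 2/7 - 5/11 using only arithmetic modulo p."""
    inv = lambda a: pow(a, -1, p)  # modular inverse (Python 3.8+)
    return (inv(3) + 2 * inv(7) - 5 * inv(11)) % p

def crt(residues, moduli):
    """Combine residues into a single residue modulo the product of the moduli."""
    x, m = 0, 1
    for r, p in zip(residues, moduli):
        t = (r - x) * pow(m, -1, p) % p  # choose t so that x + m*t == r (mod p)
        x += m * t
        m *= p
    return x, m

def rational_reconstruct(x, m):
    """Find n/d with n == d*x (mod m) and |n|, d <= sqrt(m/2)."""
    bound = isqrt(m // 2)
    r0, r1 = m, x
    s0, s1 = 0, 1
    # Invariant: r_i == s_i * x (mod m); stop at the first small remainder.
    while r1 > bound:
        q = r0 // r1
        r0, r1 = r1, r0 - q * r1
        s0, s1 = s1, s0 - q * s1
    if s1 == 0 or abs(s1) > bound:
        raise ValueError("no rational with small enough numerator and denominator")
    return Fraction(r1, s1)  # Fraction normalises the sign

x, m = crt([eval_mod(p) for p in PRIMES], PRIMES)
result = rational_reconstruct(x, m)
print(result)
```

The reconstruction is only unique while numerator and denominator stay below the bound, so in practice the number of primes has to grow with the size of the answer.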

I originally wrote this code to target GPUs. On my Mac, numpy turned out to be comparable in speed to PyTorch and way faster than TensorFlow. I think this is because those libraries are optimised around data of fairly fixed shape passing through fixed pipelines whereas my code is very ad hoc. I've a feeling a few custom kernels would speed it up a lot. (I may be wrong about this but I do know I can write CUDA/Metal code directly that is many times faster than some of my dice-nine examples.)

And one final note. This is a deeply embedded DSL. I use Python as a host to give me an AST that I interpret. This isn't simply overloading of Python operators.

Last year Ethan Heilman wrote about a simple game he calls Terminal Maneuvers. This game simulates a missile attacking an interstellar ship. The ship has a laser defence system. One player controls the missile, and the other player controls the laser. If the missile hits the ship, Missile wins. If the laser hits the missile, the missile is destroyed and Laser wins.

The complicating factor is that, due to the relative motion of the laser and the ship being a significant fraction of the speed of light, Laser has to aim not at the missile but where the missile will be. This distance allows the missile to perform erratic maneuvers to prevent Laser from knowing what its future position will be. However, Missile must expend fuel to perform these maneuvers.

The Terminal Maneuvers game proceeds in five rounds, giving the laser five opportunities to hit the missile. In each round, Missile secretly commits to an amount of fuel they will expend. Laser must “aim” by guessing the amount of fuel expended by Missile. If they guess correctly, there is some probability of destroying the missile, which depends on how far away the missile is and how much fuel the missile expended. The table below shows the probabilities of the missile being destroyed in the various rounds.

| Fuel Cost | Round 1 | Round 2 | Round 3 | Round 4 | Round 5 |
|---|---|---|---|---|---|
| 0 Fuel | 100% | 100% | 100% | 100% | 100% |
| 1 Fuel | 1/6 | 2/6 | 3/6 | 4/6 | 5/6 |
| 2 Fuel | 0% | 1/6 | 2/6 | 3/6 | 4/6 |
| 3 Fuel | 0% | 0% | 1/6 | 2/6 | 3/6 |
| 4 Fuel | 0% | 0% | 0% | 1/6 | 2/6 |
| 5 Fuel | 0% | 0% | 0% | 0% | 1/6 |
| 6 Fuel | 0% | 0% | 0% | 0% | 0% |

The missile has a limited amount of fuel at the start of the game. Fuel spent earlier in the game means less fuel available later in the game when it is most needed. The amount of starting fuel selects the difficulty of the game. Ethan suggests starting with seven fuel, which empirically gives Missile about a 25% chance of winning.

Laser knows how much fuel the missile has at the start of each round, so it is imperative that Missile does not run out of fuel in the middle of the game. If Laser knows the missile is out of fuel, Laser will predict zero fuel used and will always successfully destroy the missile. That said, as long as Missile has some fuel, choosing to burn zero fuel is still a legitimate option.

Starting with seven fuel, one strategy for Missile would be to burn one fuel on the first four rounds and burn the remaining three fuel on the last round. However, if Laser realizes this is Missile’s strategy, Laser can always predict the correct amount of fuel that will be used by the missile. Taking the product of all the probabilities of Missile’s survival in each round, Missile only has a 4.6% chance of winning. Clearly, Missile’s optimal strategy should be non-deterministic.
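The 4.6% figure can be checked with a few lines of Python. This is only a sketch: `hit_probability` encodes my reading of the table as (round − fuel + 1)/6, clipped at zero, with a burn of 0 fuel always being hit, and the function name is made up for this example.

```python
from fractions import Fraction

def hit_probability(round_no, fuel_burned):
    """Chance that a correct prediction destroys the missile (assumed table formula)."""
    if fuel_burned == 0:
        return Fraction(1)
    return Fraction(max(0, round_no - fuel_burned + 1), 6)

burns = [1, 1, 1, 1, 3]  # fuel burned in Rounds 1..5, seven fuel in total

# Laser always predicts correctly, so Missile must survive every round's hit chance.
survival = Fraction(1)
for round_no, fuel in enumerate(burns, start=1):
    survival *= 1 - hit_probability(round_no, fuel)

print(survival, float(survival))
```

The product comes out to 5⁄108, which is about 4.6%.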

I figured this game would be a fun exercise in learning about mixed-strategy (i.e., non-deterministic) Nash equilibrium. This game is a small finite game, so it is reasonably easy to analyze, but it is significantly more complicated than trivial games often used in Nash equilibrium examples.

For these calculations, it is best to find the Nash equilibrium strategy at the endgame and work backward from there. To that end, let us start with the simplest non-trivial endgame. Missile has survived to Round 5 and has 1 fuel left. Missile can choose to burn their last fuel or not, and Laser can choose to aim at no fuel burned or not. This yields the following game-theoretic payoff matrix, listing the probabilities of Missile and Laser winning, in that order:

| | Predict 0 | Predict 1 |
|---|---|---|
| Burn 0 | 0, 1 | 1, 0 |
| Burn 1 | 1, 0 | 1⁄6, 5⁄6 |

This is a constant-sum game, because the total score of all players is always the same, no matter the outcome. Constant-sum games are also known as zero-sum games since they can be translated into games where the sum of each outcome is zero without affecting any strategy.

The definition of Nash equilibrium is a pair of strategies, one for each player, where neither player individually can change strategies to improve their outcome. Therefore, one potential way for Missile to devise a strategy is to find one where Laser’s chance of winning is the same regardless of the move that they make. Such a strategy is not necessarily going to be possible, but we can give it a try.

Let p be the probability that Missile will burn 0, and let q be the probability that Missile will burn 1. If Laser predicts 0, the probability of them winning is p. If Laser predicts 1, the probability of them winning is 5⁄6 q. If Laser cannot make a choice between these two options to improve their odds, then p = 5⁄6 q. Missile’s probabilities must add up to 1, so we also require p + q = 1.

We have a linear system of two equations and two unknowns, so we can try to solve it. The solution is p = 5⁄11 and q = 6⁄11. Missile burns no fuel with probability 5⁄11 and burns its one fuel with probability 6⁄11. This provides Missile a 6⁄11 chance of winning, regardless of which prediction Laser makes.

On the flip side, Laser’s Nash equilibrium can be computed by choosing a set of probabilities so that Missile’s outcome is the same regardless of whether they choose to burn fuel or not. This time, let p be the probability that Laser will predict 0, and let q be the probability that Laser will predict 1. If Missile burns 0, the probability of them winning is q. If Missile burns 1, the probability of them winning is p + 1⁄6 q. Again, if Missile cannot make a choice between these two options to improve their odds, then q = p + 1⁄6 q. Laser’s probabilities also must add up to 1, so we also require p + q = 1.

Rearranging q = p + 1⁄6 q, we get 5⁄6 q = p, which happens to be the exact same equation Missile had. Thus, their solutions are identical. Laser predicts no fuel burned with a probability of 5⁄11 and predicts one fuel burned with a probability of 6⁄11. This provides Laser a 5⁄11 chance of winning no matter whether Missile has chosen to burn their fuel or not. This chance is the complement to Missile’s 6⁄11 chance of winning, as it has to be.

| | Predict 0 | Predict 1 | Predict 2 |
|---|---|---|---|
| Burn 0 | 0, 1 | 1, 0 | 1, 0 |
| Burn 1 | 1, 0 | 1⁄6, 5⁄6 | 1, 0 |
| Burn 2 | 1, 0 | 1, 0 | 2⁄6, 4⁄6 |

If the game ends in Round 5 with Missile having 2 fuel left, we have the above payoff matrix. We can solve similar linear algebra problems on three variables to find strategies for each player so that the other player’s outcome is the same no matter which of their three choices they make. The solution has Missile burn 0 fuel with probability 10⁄37, burn 1 fuel with probability 12⁄37, and burn 2 fuel with probability 15⁄37, giving Missile a 27⁄37 chance of winning regardless of what Laser’s prediction is.

Laser makes the predictions with the same probability distribution, giving Laser a 10⁄37 chance of winning no matter how much fuel Missile chooses to burn. This sort of distribution is what we might expect: somewhat evenly distributed with a bias towards burning more fuel, which provides some evasion for Missile.

What I found surprising is how when Laser plays at their Nash equilibrium, they simply do not care how much fuel Missile has secretly chosen to burn. Their odds of winning are the same regardless of what Missile reveals. It is as if Laser is no longer playing against Missile at all. Missile’s choices no longer matter. This result is called the “indifference principle.” Later we will see that Missile’s choices sometimes can matter.

Missile feels the same when playing at their equilibrium. No matter what prediction Laser ultimately makes, Missile’s odds of winning have already been fixed by playing at the Nash equilibrium.

In theory, this is what playing poker at a Nash equilibrium should feel like. Based on the state of the board, you make a random selection of calls, folds, or raises according to some appropriate distribution, and your distribution has fixed your expected payout at that point, independent of the choices the other players are going to make. No need to stress over whether your bluff will be called or not.

Before moving on to analyzing Round 4, we can complete a chart of the probability of Missile winning when playing at their Nash equilibrium depending on how much fuel they have remaining.

| Remaining Fuel | Missile Win Probability | Laser Win Probability |
|---|---|---|
| 0 | 0% | 100% |
| 1 | 6⁄11 ≈ 54.5% | 5⁄11 ≈ 45.5% |
| 2 | 27⁄37 ≈ 73.0% | 10⁄37 ≈ 27.0% |
| 3 | 47⁄57 ≈ 82.5% | 10⁄57 ≈ 17.5% |
| 4 | 77⁄87 ≈ 88.5% | 10⁄87 ≈ 11.5% |
| 5 | 137⁄147 ≈ 93.2% | 10⁄147 ≈ 6.8% |
| 6+ | 100% | 0% |
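The Round 5 rows of this table follow a simple pattern that can be reproduced exactly. The sketch below is my own shorthand rather than anything from the original analysis (the function name `round5_equilibrium` is hypothetical). If Laser's chance of winning is to be the same for every prediction, Missile's probability of burning k fuel must be proportional to 1 divided by the Round 5 hit chance for k fuel.

```python
from fractions import Fraction

def round5_equilibrium(fuel):
    """Equalizing Round 5 strategy for Missile with `fuel` units left (1 to 5).

    Returns (burn probabilities for 0..fuel, Missile's win probability).
    """
    # Round 5 hit chance when Laser predicts the burn correctly: 100% for 0 fuel,
    # otherwise (6 - k)/6 for k fuel, as in the Round 5 column of the rules table.
    hit = [Fraction(1)] + [Fraction(6 - k, 6) for k in range(1, fuel + 1)]
    # Laser wins with probability p_k * hit_k when predicting k, so p_k is
    # proportional to 1 / hit_k once all predictions are equally good.
    weights = [1 / h for h in hit]
    total = sum(weights)
    strategy = [w / total for w in weights]
    laser_win = 1 / total
    return strategy, 1 - laser_win

for fuel in range(1, 6):
    strategy, missile_win = round5_equilibrium(fuel)
    print(fuel, missile_win, [str(p) for p in strategy])
```

With 6 or more fuel the hit chance for burning 6 is zero, so the formula no longer applies and Missile simply wins.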

If Missile starts Round 4 with 1 fuel remaining, they are in big trouble. They can only burn fuel in at most one of the two remaining rounds. Therefore, Laser can win by predicting 0 fuel burned in both Round 4 and Round 5. Laser is guaranteed to destroy the missile on one of those two rounds. Missile must start Round 4 with at least 2 fuel remaining if they are to have a chance of winning.

Since the game in each round depends only on the state of Missile’s remaining fuel and not on the specific choices of how that state came to be, we can simplify the analysis of Round 4’s payoff matrix by using each player’s probability of winning Round 5 as their scores in Round 4. Note that Missile does not have the option of burning all their fuel in Round 4, since starting Round 5 with 0 fuel is a guaranteed loss for them, and Laser knows it.

| | Predict 0 | Predict 1 |
|---|---|---|
| Burn 0 | 0, 1 | 27⁄37, 10⁄37 |
| Burn 1 | 6⁄11, 5⁄11 | 2⁄11, 9⁄11 |

To compute Missile’s strategy, we define p and q as before. This time Missile needs to solve the equations p + 5⁄11 q = 10⁄37 p + 9⁄11 q and p + q = 1. The solution has Missile burn 0 fuel with a probability of 148⁄445 and burn 1 fuel with a probability of 297⁄445, which is roughly a 1⁄3rd–2⁄3rd split. This provides Missile a chance of winning with a probability of 162⁄445, or about 36.4%.

Meanwhile, Laser needs to solve the equations 27⁄37 q = 6⁄11 p + 2⁄11 q and p + q = 1. The solution has Laser predict 0 fuel with probability 223⁄445 and predict 1 fuel with probability 222⁄445, which is nearly evenly split. This provides Laser a chance of winning of 283⁄445, or about 63.6%.
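Both pairs of equations are instances of the usual closed form for a 2×2 zero-sum game whose equilibrium is fully mixed. Here is a sketch with exact fractions; the function name `solve_2x2` and its argument layout are assumptions for this example, and the formula is only valid when the game has no pure-strategy saddle point.

```python
from fractions import Fraction as F

def solve_2x2(a, b, c, d):
    """Mixed equilibrium of a 2x2 zero-sum game with row-player payoffs [[a, b], [c, d]].

    Returns (probability of row 0, probability of column 0, value to the row player).
    Assumes the equilibrium is fully mixed (no saddle point).
    """
    denom = a - b - c + d
    row0 = (d - c) / denom      # makes the column player indifferent
    col0 = (d - b) / denom      # makes the row player indifferent
    value = row0 * a + (1 - row0) * c
    return row0, col0, value

# Missile's win probabilities in Round 4 with 2 fuel (rows: burn 0/1, columns: predict 0/1).
burn0, predict0, missile_win = solve_2x2(F(0), F(27, 37), F(6, 11), F(2, 11))
print(burn0, predict0, missile_win)
```

The three printed fractions correspond to Missile burning 0 fuel, Laser predicting 0 fuel, and Missile's overall chance of winning.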

In Round 5, each player’s individual payoff matrix was symmetric, which led to Missile and Laser having identical strategies. In Round 4, the individual players’ payoff matrices are no longer symmetric, and Missile and Laser end up with different strategies. Laser picks a nearly 50–50 split because the differences of column scores, 5⁄11 − 1 vs. 9⁄11 − 10⁄37, are nearly equal in magnitude, whereas Missile picks a 1⁄3rd–2⁄3rd split because the differences of row scores, 27⁄37 − 0 vs. 2⁄11 − 6⁄11, differ in magnitude by close to a factor of two.

We can proceed as before, using linear algebra to compute equilibrium strategies for Round 4 with various states of remaining fuel for the missile. However, we run into a problem when Missile has 4 fuel remaining.

| | Predict 0 | Predict 1 | Predict 2 | Predict 3 |
|---|---|---|---|---|
| Burn 0 | 0, 1 | 77⁄87, 10⁄87 | 77⁄87, 10⁄87 | 77⁄87, 10⁄87 |
| Burn 1 | 47⁄57, 10⁄57 | 47⁄171, 124⁄171 | 47⁄57, 10⁄57 | 47⁄57, 10⁄57 |
| Burn 2 | 27⁄37, 10⁄37 | 27⁄37, 10⁄37 | 27⁄74, 47⁄74 | 27⁄37, 10⁄37 |
| Burn 3 | 6⁄11, 5⁄11 | 6⁄11, 5⁄11 | 6⁄11, 5⁄11 | 4⁄11, 7⁄11 |

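Let us try to solve for Laser’s equilibrium strategy, the probability distribution where Missile’s outcome is the same no matter what move they make. We let p, q, r, and s be the probabilities of predicting 0 through 3 fuel burned, respectively. In addition to having p + q + r + s = 1, we require that each of Missile’s four rows in the payoff matrix gives Missile the same win probability:

$$
\tfrac{77}{87}\,(q + r + s)
= \tfrac{47}{57}\left(p + \tfrac{1}{3}q + r + s\right)
= \tfrac{27}{37}\left(p + q + \tfrac{1}{2}r + s\right)
= \tfrac{6}{11}\left(p + q + r + \tfrac{2}{3}s\right)
$$

Solving this linear system gives p ≈ 34.4%, q ≈ 44.3%, r ≈ 40.8%, and s ≈ −19.5%.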

Apparently, predicting 3 fuel used is such a terrible move for Laser that our “optimal” solution wants us to predict it with a negative 19.5% probability! Unfortunately, Laser cannot actually select moves with negative probability. We have to add constraints to our acceptable solutions to ensure all probabilities are non-negative.
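The negative probability can be reproduced by solving the indifference system exactly. The sketch below is my own: the `solve` helper is a plain Gauss-Jordan elimination over fractions, and the payoff matrix is rebuilt from the Round 5 win probabilities and the Round 4 survival chances (0, 1⁄3, 1⁄2, 2⁄3 for a correctly predicted burn of 0 to 3 fuel). The unknowns are p, q, r, s and Missile's common win probability v.

```python
from fractions import Fraction as F

def solve(a, b):
    """Solve the square system a x = b exactly by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = next(r for r in range(col, n) if m[r][col] != 0)
        m[col], m[pivot] = m[pivot], m[col]
        m[col] = [x / m[col][col] for x in m[col]]
        for r in range(n):
            if r != col and m[r][col] != 0:
                factor = m[r][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [row[n] for row in m]

# Missile's Round 5 win probability after burning 0..3 of their 4 fuel in Round 4.
next_round = [F(77, 87), F(47, 57), F(27, 37), F(6, 11)]
# Chance of surviving Round 4 when Laser predicts the burn correctly.
survive = [F(0), F(1, 3), F(1, 2), F(2, 3)]
payoff = [[next_round[i] * (survive[i] if i == j else 1) for j in range(4)]
          for i in range(4)]

# One equation per Missile move: sum_j payoff[i][j] * x_j - v = 0, plus sum_j x_j = 1.
rows = [payoff[i] + [F(-1)] for i in range(4)] + [[F(1)] * 4 + [F(0)]]
p, q, r, s, v = solve(rows, [F(0)] * 4 + [F(1)])
print([round(float(x), 3) for x in (p, q, r, s, v)])
```

The fourth entry is negative, which is why the plain indifference equations are not enough here.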

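Adding linear constraints to our problem brings us into the realm of linear programming. Since we are entering this realm, we can take this opportunity to compute the minimax solution for each player. For Laser, the minimax solution is to compute a probability distribution that minimizes Missile’s score, i.e., their probability of winning, which we will denote by z, subject to the constraint that Missile will choose the move that maximizes their score for that distribution. This leads to the following system of linear constraints:

$$
\begin{aligned}
\text{minimize}\quad & z \\
\text{subject to}\quad
& \tfrac{77}{87}\,(q + r + s) \le z \\
& \tfrac{47}{57}\left(p + \tfrac{1}{3}q + r + s\right) \le z \\
& \tfrac{27}{37}\left(p + q + \tfrac{1}{2}r + s\right) \le z \\
& \tfrac{6}{11}\left(p + q + r + \tfrac{2}{3}s\right) \le z \\
& p + q + r + s = 1 \\
& p,\ q,\ r,\ s \ge 0
\end{aligned}
$$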

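Using linear programming, we can optimize this system. The optimal solution is

$$
p \approx 30.5\%,\quad q \approx 38.1\%,\quad r \approx 31.4\%,\quad s = 0,\quad z \approx 61.5\%.
$$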

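Is this minimax strategy really an optimal strategy? Let us look at Missile’s minimax strategy. Writing a, b, c, and d for Missile’s probabilities of burning 0 through 3 fuel and w for the win probability Missile can guarantee, we need to optimize the following system of linear constraints, with one inequality per Laser prediction:

$$
\begin{aligned}
\text{maximize}\quad & w \\
\text{subject to}\quad
& \tfrac{47}{57}b + \tfrac{27}{37}c + \tfrac{6}{11}d \ge w \\
& \tfrac{77}{87}a + \tfrac{47}{171}b + \tfrac{27}{37}c + \tfrac{6}{11}d \ge w \\
& \tfrac{77}{87}a + \tfrac{47}{57}b + \tfrac{27}{74}c + \tfrac{6}{11}d \ge w \\
& \tfrac{77}{87}a + \tfrac{47}{57}b + \tfrac{27}{37}c + \tfrac{4}{11}d \ge w \\
& a + b + c + d = 1 \\
& a,\ b,\ c,\ d \ge 0
\end{aligned}
$$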

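The optimal solution is

$$
a \approx 19.9\%,\quad b \approx 32.0\%,\quad c \approx 48.2\%,\quad d = 0,\quad w \approx 61.5\%.
$$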

This pair of strategies is optimal because Laser wins at least 38.5% of the time by their strategy, and Missile wins at least 61.5% of the time by their strategy, which adds up to 100%. It turns out that for zero-sum games, the minimax, maximin, and Nash equilibrium strategy sets are all identical, and furthermore, these strategies form a convex set. Using a general-purpose Nash equilibrium solver will produce the same pair of optimal strategies.
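This optimality argument can be checked without a linear programming library. The sketch below does not run an LP solver. Instead it assumes that both players drop the "3 fuel" option, solves the remaining 3×3 indifference equations exactly, and then verifies that the dropped options are not profitable deviations. The `solve` helper and the variable names are my own.

```python
from fractions import Fraction as F

def solve(a, b):
    """Solve the square system a x = b exactly by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = next(r for r in range(col, n) if m[r][col] != 0)
        m[col], m[pivot] = m[pivot], m[col]
        m[col] = [x / m[col][col] for x in m[col]]
        for r in range(n):
            if r != col and m[r][col] != 0:
                factor = m[r][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [row[n] for row in m]

next_round = [F(77, 87), F(47, 57), F(27, 37), F(6, 11)]  # Round 5 values
survive = [F(0), F(1, 3), F(1, 2), F(2, 3)]               # Round 4 survival if predicted
M = [[next_round[i] * (survive[i] if i == j else 1) for j in range(4)]
     for i in range(4)]

support = [0, 1, 2]  # assumption: neither player uses option 3
n = len(support)
rhs = [F(0)] * n + [F(1)]

# Laser mixes over predictions so that burns 0..2 all give Missile the score z.
rows = [[M[i][j] for j in support] + [F(-1)] for i in support] + [[F(1)] * n + [F(0)]]
*laser, z = solve(rows, rhs)

# Missile mixes over burns so that predictions 0..2 all give Missile the score w.
cols = [[M[i][j] for i in support] + [F(-1)] for j in support] + [[F(1)] * n + [F(0)]]
*missile, w = solve(cols, rhs)

# Deviations outside the support: Missile burning 3, or Laser predicting 3.
burn3_score = sum(M[3][j] * x for j, x in zip(support, laser))
predict3_score = sum(M[i][3] * y for i, y in zip(support, missile))

print(float(z), float(w), float(burn3_score), float(predict3_score))
```

The two values agree, burning 3 fuel scores less than z for Missile, and predicting 3 fuel concedes more than w for Laser, so neither player gains by leaving the support.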

Still, these strategies surprised me. Laser is not even aiming at Missile burning 3 fuel. Shouldn’t Missile avoid being hit by Laser entirely by choosing to burn 3 fuel? But Missile’s optimal strategy also says to avoid burning 3 units of fuel. Why?

Upon closer examination, we see that with Missile’s computed optimal strategy, they have a 61.5% chance of winning. If Missile were to burn 3 fuel, yes, they would avoid being hit by Laser in Round 4. However, they would begin Round 5 with only 1 remaining fuel. In that state they would only have a 54.5% chance of winning, worse odds than their optimal strategy that avoids burning 3 fuel.

Laser is not aiming at Missile burning 3 fuel because Laser would love for Missile to burn 3 fuel. Doing so would increase Laser’s odds of winning from 38.5% to 45.5%. We see that Laser’s strategy does not entirely rule out all consequences of Missile’s choices. It only makes it indifferent to Missile’s choices within the support of Missile’s optimal mixed set of moves. Technically an opponent’s choices can affect the outcome of the game; they can still make moves that benefit the other player.

Continuing with linear programming, we can fill out the table for the probability of winning for Round 4.

| Remaining Fuel | Missile Win Probability | Laser Win Probability |
|---|---|---|
| ≤ 1 | 0% | 100% |
| 2 | ≈ 36.4% | ≈ 63.6% |
| 3 | ≈ 50.5% | ≈ 49.5% |
| 4 | ≈ 61.5% | ≈ 38.5% |
| 5 | ≈ 69.9% | ≈ 30.1% |
| 6 | ≈ 76.4% | ≈ 23.6% |
| 7 | ≈ 81.6% | ≈ 18.4% |

Continuing this way, we can work backwards and compute probability tables for all the rounds until we reach round 1.

| Starting Fuel | Missile Win Probability | Laser Win Probability |
|---|---|---|
| 7 | 1005005076075⁄3110959445024 ≈ 32.3% | 2105954368949⁄3110959445024 ≈ 67.7% |

In conclusion, we found that playing optimally, Missile has an approximately 32.3% chance of winning, which is a little higher than the 25% estimate given by Ethan. I leave it as an exercise to determine the fairest amount of starting fuel for Missile.

We say that a system is reliable if it continues to function correctly when events outside the system affect it. Many factors can impact the reliability of Bazel builds, especially dependencies on external services. In this post, we’ll focus on what can go wrong when your build needs resources you don’t control and what you can do to reduce the risk of build failures.

Some build actions triggered by Bazel might be accessing resources that are external to your organization. For Bazel builds, this typically applies to build rules (to build your first-party code) or repository rules (utilities and tools those rules might need).

When Bazel starts a build, it emits data about network requests, and you need to make those external requests visible so that you know what external resources your builds depend on. You can access this information via the Build Event Protocol (BEP), which can be written to disk; if you use a remote cache service, your provider might also offer a BEP viewer.

You can also use the --experimental_repository_resolved_file flag to produce resolved information about all Starlark repository rules that were executed.

Building a target that depends on a repository rule such as this:

    http_archive(
        name = "yq_cli",
        build_file = "@//tools/yq:BUILD.bazel.gen",
        sha256 = "7583d471d9bfe88e32005e9d287952382df0469135f691e044443f610d707f4d",
        url = "https://github.com/mikefarah/yq/releases/download/v4.47.1/yq_linux_amd64.tar.gz",
    )

would result in the following build event (the snippet below is copied from the BEP output):

    ...
    children {
      fetch {
        url: "https://github.com/mikefarah/yq/releases/download/v4.47.1/yq_linux_amd64.tar.gz"
      }
    }
    ...

To get an idea of what kinds of artifacts a Bazel build for a reasonably large project might fetch, let’s build a few open-source projects: Envoy, Redpanda, and datadog-agent. These are some of the domains from which at least one resource was fetched when building all targets from these projects:

    bcr.bazel.build             cdn.azul.com                dl.google.com
    dl.grafana.com              dl.min.io                   download.gnome.org
    files.pythonhosted.org      gcr.io                      github.com
    go.dev                      mirror.bazel.build          mirrors.kernel.org
    raw.githubusercontent.com   pkgconfig.freedesktop.org   pypi.org
    static.crates.io            static.rust-lang.org        s3.amazonaws.com
    www.antlr.org               www.colm.net                www.lua.org
    www.sqlite.org              www.tcpdump.org

While most of your external dependencies are going to be declared in build metadata files such as MODULE.bazel (or legacy WORKSPACE), some network requests are going to be made by build targets such as genrules (e.g., by calling curl) or toolchains (e.g., a pip call to the PyPI index). We’ll see a worked example of this later in the post.

In general, it is advised to rely on MODULE.bazel or WORKSPACE mechanisms for accessing external dependencies instead of doing so via build or test actions. Bazel by design lacks support and features for downloads to take place within build actions, and when attempting to interact with external systems this way, you will be limited in how you can manage and account for those requests.

Therefore, when building, the complete list of accessed online resources — those that are accounted for by BEP and those that are not — might be much longer. After doing a full build, it might be helpful to audit the network requests made to discover what resources were fetched and a complete inventory of external hosts your build depends on.
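As a starting point for such an audit, the fetch events that Bazel does report can be summarised with a short script. The sketch below assumes the BEP was written in text form (for instance with the --build_event_text_file flag) and that fetch events look like the snippet shown earlier. The function name `hosts_from_bep_text` is hypothetical, and requests made inside build actions will not appear in this output.

```python
import re
from urllib.parse import urlsplit

def hosts_from_bep_text(text):
    """Return the sorted set of host names found in `url: "..."` fields."""
    urls = re.findall(r'url:\s*"([^"]+)"', text)
    hosts = {urlsplit(url).hostname for url in urls}
    return sorted(host for host in hosts if host)

sample = '''
children {
  fetch {
    url: "https://github.com/mikefarah/yq/releases/download/v4.47.1/yq_linux_amd64.tar.gz"
  }
}
children {
  fetch {
    url: "https://files.pythonhosted.org/packages/example.whl"
  }
}
'''

print(hosts_from_bep_text(sample))
```

The second URL in the sample is made up purely to show two hosts being collected.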

Given these external dependencies, these are common problems that could happen to any of them:

This post focuses on strategies to either remove these external dependencies from the critical path, or make failures graceful and recoverable.

The remedies below are intentionally “stackable”: you can start with low-effort safeguards (e.g., checksums and retries) and progress toward stronger guarantees (e.g., mirrors and network blocking). If you’re skimming, you can pick one external host that concerns you (e.g., github.com or pypi.org) and follow the options that would let you depend on it more reliably.

External resources may not only vanish or become inaccessible, but also change in place. Unless there’s a strong guarantee from the provider, any artifact you download might change its contents, such as when a provider does in-place updates of their releases (or it could also be a malicious attempt to inject code).

To prevent this issue, SHA-256 digests must be coupled with any artifact you download from the Internet. Even though providing the sha256 attribute is optional when declaring dependencies on external resources, such as with http_archive, it is considered a security risk to omit the SHA-256 for remote files to be fetched.
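When you add or update such a dependency, you need the digest of the exact file you intend to pin. Any standard SHA-256 tool will do; as an illustration, here is a small Python sketch (the function names `sha256_of` and `verify` are made up for this example) that computes the digest of a downloaded file and compares it with the expected value.

```python
import hashlib

def sha256_of(path, chunk_size=1 << 20):
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify(path, expected_sha256):
    """Raise ValueError if the file does not match the pinned digest."""
    actual = sha256_of(path)
    if actual != expected_sha256.lower():
        raise ValueError(f"{path}: expected {expected_sha256}, got {actual}")
    return actual
```

The returned hex string is what goes into the sha256 attribute of the repository rule.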

As the majority of build rules and open-source tools used by projects built with Bazel are hosted on GitHub, there are some special concerns that are worth mentioning.

A public GitHub repository might be moved, deleted, or become private (this happened in 2025 with rules_mypy). If you do have to rely on external rulesets hosted on GitHub, make sure they are hosted under the bazel-contrib organization (or help get them migrated at some point) to avoid surprises.

Checksums of dynamically generated archives might change; this has caused Bazel outages before, in 2023. There was some confusion about whether the stability of archives is guaranteed or not. There might be some edge cases such as when a Git repository is renamed, and since Bazel builds rely on stability of archives (for reproducibility and caching among other reasons) it might be best to play it safe and only use releases instead of using source downloads.

It is possible that some of your dependencies need to be obtained from an online resource that is known to be unstable. What’s worse, you may not even be able to cache it (or host it yourself): for example, imagine needing to download a short-lived license file for a commercial product from the manufacturer’s server when starting a build. To make downloading this file (via a repository rule) more likely to succeed, consider using the --experimental_repository_downloader_retries flag to specify the maximum number of attempts to retry upon a download error.

This varies a lot between organizations and the programming languages concerned, but a common approach that is adopted by most organizations is to check in the source code that is used to build a binary, and not the binary itself.

Many engineers would be strongly opposed to checking in any binary, as Version Control Systems (VCS) are designed and optimised for managing source code. However, it is known that some organizations choose to place binary libraries that are external dependencies of their first-party code under version control. This has been seen occasionally in Java projects where .jar libraries (that nowadays can be managed with Maven / Gradle) were checked in. Today, this arguably makes sense only for legacy projects, air-gapped or classified networks, and vendored native libraries that are hard to rebuild.

Unless you are able to provide top-notch automation for keeping your third-party dependencies checked in under version control up-to-date, patched, and compliant with any licensing constraints, it might be best to rely on a private artifact cache for hosting third-party dependencies.

As your organization grows, you will likely need to invest in a tool that would allow you to organize your resources such as external tools and third-party code packages into repositories. There are lots of commercial solutions on the market such as JFrog Artifactory, Sonatype Nexus, AWS CodeArtifact, and GitLab package registry to name a few.

With a repository manager, once you discover a dependency on an external artifact, you would upload it manually in your internal binary repository and update your build metadata accordingly:

# MODULE.bazel

http_archive(

name = "tool",

...

urls = [

"https://artifacts.company.com/artifactory/project/tools/tool-1.2.3.tar.gz",

"https://www.project.org/source/1.2.3/tool-1.2.3.tar.gz",

]

)

URLs from the urls attribute are tried in order until one succeeds.

It is recommended to specify the local binary repository artifact first,

and if the hosted mirror happens to be down, your build would still succeed

provided that, in this case, project.org is up and running.
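The "try each URL in order" behaviour can be modelled in a few lines of Python. This is only an illustrative sketch, not Bazel's implementation: `fetch_first_available`, `AllMirrorsFailed`, and `fake_fetch` are hypothetical names, and the fake fetcher simply pretends that the internal mirror is down.

```python
from typing import Callable, Iterable, Tuple


class AllMirrorsFailed(Exception):
    """Raised when every candidate URL has been tried without success."""


def fetch_first_available(
    urls: Iterable[str], fetch: Callable[[str], bytes]
) -> Tuple[str, bytes]:
    """Try each URL in order and return (url, payload) for the first that works."""
    errors = []
    for url in urls:
        try:
            return url, fetch(url)
        except OSError as exc:  # network-style failures; other errors propagate
            errors.append(f"{url}: {exc}")
    raise AllMirrorsFailed("; ".join(errors))


def fake_fetch(url: str) -> bytes:
    # Hypothetical stand-in for an HTTP client: the internal mirror is "down".
    if url.startswith("https://artifacts.company.com/"):
        raise ConnectionError("mirror unreachable")
    return b"tool-1.2.3 contents"


urls = [
    "https://artifacts.company.com/artifactory/project/tools/tool-1.2.3.tar.gz",
    "https://www.project.org/source/1.2.3/tool-1.2.3.tar.gz",
]
used_url, payload = fetch_first_available(urls, fake_fetch)
print(used_url)
```

Listing the internal mirror first means the public host is only contacted when the mirror fails, which is the ordering recommended above.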

You could also let your binary repository manager be the only place where Bazel builds can fetch resources from

if you don’t want to depend on external artifacts in any way at all.

This can be achieved by providing a configuration file for the remote downloader

using the --downloader_config flag.

For example, a simple use case may be to block GitHub and instead rewrite fetches to go to an Artifactory instance. This can be done with the following downloader configuration:

rewrite github.com/([^/]+)/([^/]+)/releases/download/([^/]+)/(.*) artifacts.my-company.com/artifactory/github-releases-mirror/$1/$2/releases/download/$3/$4

# if you still have to rely on dynamically generated archives instead of releases

rewrite github.com/([^/]+)/([^/]+)/archive/(.+).(tar.gz|zip) artifacts.my-company.com/artifactory/github-releases-mirror/$1/$2/archive/$3.$4

However, support for using Bazel’s downloader needs to be enabled in Bazel rulesets by their authors.

For instance, in rules_python, the pip extension

now supports pulling information from a PyPI compatible mirror

which means that the Bazel downloader can be used for downloading Python wheels.

Take a look at some downloader configurations used in other projects (e.g., 1, 2, 3) to explore how others set up access to external resources and learn the nuances of the configuration declaration syntax.
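To get a feel for what a rewrite directive does to a URL, here is a rough Python model of a single rule. It assumes the pattern is matched against the whole host-plus-path string (without the scheme) and that `$1`, `$2`, ... refer to capture groups; Bazel's actual matching semantics may differ in details, and `apply_rewrite` is a hypothetical helper, not part of Bazel.

```python
import re
from typing import Optional


def apply_rewrite(pattern: str, replacement: str, target: str) -> Optional[str]:
    """Return the rewritten target, or None if the pattern does not match."""
    match = re.fullmatch(pattern, target)
    if match is None:
        return None
    # Translate "$N" group references into Python's "\g<N>" template syntax.
    template = re.sub(r"\$(\d+)", r"\\g<\1>", replacement)
    return match.expand(template)


# The release-download rule from the configuration above.
RELEASES_PATTERN = r"github.com/([^/]+)/([^/]+)/releases/download/([^/]+)/(.*)"
RELEASES_REPLACEMENT = (
    "artifacts.my-company.com/artifactory/github-releases-mirror/"
    "$1/$2/releases/download/$3/$4"
)

# Hypothetical URL (scheme stripped) used purely for illustration.
rewritten = apply_rewrite(
    RELEASES_PATTERN,
    RELEASES_REPLACEMENT,
    "github.com/example-org/example-repo/releases/download/v1.0.0/example.tar.gz",
)
print(rewritten)
```

Running a handful of real dependency URLs through a model like this before rolling out a new configuration is a cheap way to catch patterns that match less (or more) than intended.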

Additional control of network access can be achieved by blocking some network requests in CI agents using custom firewall rules or other tools of that nature. However, as mentioned earlier, Bazel’s downloader configuration can only rewrite or block requests that Bazel is aware of. This means that not all network traffic in a Bazel build is Bazel-managed traffic.

To illustrate this, let’s declare a dependency on the gawk binary.

When running gawk, its sources are going to be fetched from the GNU FTP server.

Let’s also add a genrule that will download an archive from the same FTP server:

# MODULE.bazel

bazel_dep(name = "gawk", version = "5.3.2")

# BUILD.bazel

genrule(

name = "diffutils",

outs = ["diffutils-3.12.tar.xz"],

cmd = """wget -O "$@" https://ftp.gnu.org/gnu/diffutils/diffutils-3.12.tar.xz""",

)

We’ll configure Bazel to use a downloader configuration that blocks fetches from that FTP server:

# bazel_downloader.cfg

block ftp.gnu.org

# .bazelrc

common --downloader_config=bazel_downloader.cfg

When attempting to run the gawk binary from the ruleset, an error is expectedly raised

since accessing the server is blocked:

$ bazel run @gawk

...

ERROR: java.io.IOException: Configured URL rewriter blocked all URLs:

[https://ftp.gnu.org/gnu/gawk/gawk-5.3.2.tar.xz]

However, building a genrule still succeeds

because the downloader configuration does not apply here:

$ bazel build //src:diffutils

...

INFO: From Executing genrule //src:diffutils:

--2026-01-19 10:48:54-- https://ftp.gnu.org/gnu/diffutils/diffutils-3.12.tar.xz

Resolving ftp.gnu.org (ftp.gnu.org)... 209.51.188.20, 2001:470:142:3::b

Connecting to ftp.gnu.org (ftp.gnu.org)|209.51.188.20|:443... connected.

HTTP request sent, awaiting response... 200 OK

Saving to: 'bazel-out/k8-fastbuild/bin/src/diffutils-3.12.tar.xz'

External network requests of this nature are hard to audit in a large codebase

since they won’t show up as structured fetch events in BEP output.

To mitigate this, prefer using repository rules and Bzlmod extensions

for any downloads instead of ad hoc shell commands.

Going a step further, you might want to consider forbidding direct calls to applications

that might make network requests (such as curl or wget) in genrule targets, unless explicitly approved.

Where unavoidable, configure targets to access internal repositories instead of public endpoints.

When builds run in a Bazel sandbox, their actions are executed in a container (using Linux namespaces)

to isolate the build actions from the host.

In addition to making your entire filesystem read-only (except for the sandbox directory),

you can also forbid actions from accessing the network.

This is useful in some scenarios when you want to confirm that a build doesn’t make any network requests

such as when running unit tests or integration tests that are not supposed to make any network calls.

See Bazel tags requires-network and block-network

to learn how to control network access for individual build targets.

Keep in mind that cached results of build actions can still be fetched even when blocking the network in a sandbox. So if artifacts needed for a build were uploaded to the Bazel cache previously, you won’t know whether a particular build needs any network resources unless you run the build without cache access. Also, none of the sandbox flags affect any cache as it’s expected that these flags should not affect the output of hermetic actions and making them part of a cache key would worsen the effectiveness of the cache.
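The following toy model shows why a cache hit can hide a network dependency. It is a deliberately simplified sketch, not how Bazel computes action keys: `action_key` and `ToyExecutor` are hypothetical, and the only point is that the key ignores the sandbox network setting.

```python
import hashlib
from typing import Dict, List


def action_key(command: str, input_digests: List[str]) -> str:
    """Toy cache key: depends on the command and its inputs, not on sandbox flags."""
    hasher = hashlib.sha256()
    hasher.update(command.encode("utf-8"))
    for digest in sorted(input_digests):
        hasher.update(b"\0" + digest.encode("utf-8"))
    return hasher.hexdigest()


class ToyExecutor:
    def __init__(self) -> None:
        self.cache: Dict[str, str] = {}
        self.executions = 0

    def run(self, command: str, input_digests: List[str], *,
            needs_network: bool, allow_network: bool) -> str:
        key = action_key(command, input_digests)
        if key in self.cache:
            # Cache hit: the action is never executed, so the sandbox is not involved.
            return self.cache[key]
        if needs_network and not allow_network:
            raise RuntimeError("network access is blocked in the sandbox")
        self.executions += 1
        result = f"output of {command!r}"
        self.cache[key] = result
        return result


executor = ToyExecutor()
command = "wget -O out https://ftp.gnu.org/gnu/diffutils/diffutils-3.12.tar.xz"
first = executor.run(command, [], needs_network=True, allow_network=True)
# Same action with the network blocked: served from the cache, no failure.
second = executor.run(command, [], needs_network=True, allow_network=False)
print(executor.executions)
```

Only a run against an empty cache (a fresh `ToyExecutor` here) would surface the blocked network call, which mirrors the advice to verify without cache access.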

With the network disabled in a sandbox, the genrule target we declared earlier fails to build:

$ bazel build //src:diffutils --spawn_strategy=linux-sandbox --nosandbox_default_allow_network

...

ERROR: Executing genrule //src:diffutils failed: (Exit 4): bash failed: ...

Resolving ftp.gnu.org (ftp.gnu.org)... failed: Temporary failure in name resolution.

wget: unable to resolve host address 'ftp.gnu.org'

Target //src:diffutils failed to build

...

Since Bazel 8.4, you can also use the --module_mirrors flag

to mirror the source archives.

To take advantage of this, add --module_mirrors=https://bcr.cloudflaremirrors.com in your .bazelrc file.

Keep in mind that this only applies to registry sources and not to other resources fetched by Bazel

(such as downloads happening in the repository rules context).

Note that for Bazel builds, the Bazel Central Registry (BCR) only stores metadata for a Bazel module; the actual artifacts are usually fetched from URLs that point to files hosted online (most often on GitHub).

BCR itself is a sort of external dependency for your builds, too.

Even though it’s hosted on production-grade infrastructure at Google, it can still be impacted by outages and operational mishaps.

The SSL certificate for mirror.bazel.build has expired, causing worldwide CI breakages, at least twice:

once in 2022 and again in 2025.

Refer to Postmortem for bazel.build SSL certificate expiry to learn more.

Configuring Bazel to use https://bcr.cloudflaremirrors.com as a mirror for modules from the BCR helps,

but the Cloudflare mirror doesn’t cover the registry itself.

So if you want to go the extra mile, you might also consider setting up your own BCR index registry

and point Bazel at that instead.

But if this is not feasible, write a playbook for incident response

around build outages caused by external dependencies, so teams don’t have to improvise under pressure.

If your repository manager supports it, you could let your builds download external resources, but every resource that is being fetched is saved into the cache as well. On subsequent builds, the resources are going to be fetched from the cache, if available. This would let you turn random external downloads into a controlled internal dependency without requiring you to pre-vendor everything up front.

If your CI agents are in the same network or cloud region (depending on your infrastructure setup), this could also speed up the builds by having downloads complete faster. Not relying on external resources also makes your Bazel builds a lot more secure, as your CI agents will only download data from a trusted source.

If using an off-the-shelf solution, such as the popular JFrog Artifactory, is not possible,

there are some other options.

Bazel picks up proxy addresses from the HTTP_PROXY and HTTPS_PROXY environment variables

and uses these to download files over HTTP and HTTPS, respectively (if specified).

This means you might have success with caching proxy solutions such as Squid and Charles

or by combining Nginx and Varnish HTTP reverse proxies.

Routing requests through a proxy might also help to avoid rate limiting issues

since the external service will see fewer direct requests.

With this configuration, your downloader configuration file would look something like this:

# point all downloads at the mirror

rewrite (.*) {caching-service-url}/$1

# use the original location if the mirror is down

rewrite (.*) $1

For a completely custom solution, take a look at the Bazel downloader mirror from Monogon which can be used to mirror Bazel dependencies to a cloud bucket storage such as S3 or GCS. Bazel’s remote asset API lets you use an existing remote cache (content-addressable storage: CAS) as a downloader cache as well. The cache provider service needs to support it, but many existing solutions, both commercial and open-source ones, are compatible.

The --experimental_remote_downloader flag

can be specified to provide a Remote Asset API endpoint URI to be used as a remote download proxy.

To get started, consider using bazel-remote, which has out-of-the-box support for this use case.

Make sure to provide the sha256 for the assets to fetch

so that they can be cached just like any other CAS object.

A remote caching service will automatically download the assets from the URL if they are not found in the CAS and cache them thereafter.
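A minimal sketch of this digest-keyed lookup, written as a toy in-memory model rather than a Remote Asset API client: `ToyAssetCache`, `DigestMismatch`, and `fake_download` are hypothetical names, and a real service would persist blobs and talk to the network.

```python
import hashlib
from typing import Callable, Dict


class DigestMismatch(Exception):
    """Raised when downloaded bytes do not match the expected sha256."""


class ToyAssetCache:
    def __init__(self, download: Callable[[str], bytes]) -> None:
        self._download = download
        self._cas: Dict[str, bytes] = {}  # sha256 hex digest -> blob
        self.downloads = 0

    def fetch(self, url: str, sha256: str) -> bytes:
        cached = self._cas.get(sha256)
        if cached is not None:
            return cached  # CAS hit: no network access needed
        blob = self._download(url)  # CAS miss: fall back to the origin URL
        self.downloads += 1
        actual = hashlib.sha256(blob).hexdigest()
        if actual != sha256:
            raise DigestMismatch(f"expected {sha256}, got {actual}")
        self._cas[sha256] = blob
        return blob


def fake_download(url: str) -> bytes:
    # Hypothetical origin server that returns the same bytes for any URL.
    return b"archive bytes"


expected = hashlib.sha256(b"archive bytes").hexdigest()
cache = ToyAssetCache(fake_download)
first = cache.fetch("https://www.project.org/source/1.2.3/tool-1.2.3.tar.gz", expected)
second = cache.fetch("https://www.project.org/source/1.2.3/tool-1.2.3.tar.gz", expected)
print(cache.downloads)
```

Because the blob is keyed by its digest rather than by its URL, an asset without a known sha256 has no stable key to look up, which is why providing the checksum matters.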

Bazel 9 adds support for remote repository caches which make Bazel builds (at least those requiring previously cached assets) extra resilient to external access issues. During outages of external hosting services, those organizations that didn’t have a central repository manager where repository rules artifacts could be stored had to extract files from cache directories on local developer machines and save them to an accessible location within the internal network.

Now these artifacts will be saved into a remote cache similarly to build output results.

To confirm that your remote repository cache works as expected,

you can use the --repository_disable_download flag

after doing a clean build (which should succeed as it will reuse the remote cache entries uploaded in the previous build).

Finally, instead of waiting for the next GitHub outage, you can test your resilience by intentionally breaking access to certain external hosts. In a staging CI environment, temporarily block access to key external systems with firewall rules and verify that your mirrors and caches are used as expected, builds either still succeed, or fail fast with clear error messages, and your runbooks are correct and sufficient.

Bazel projects often depend on external services in subtle ways, and any instability or change in those services can break otherwise healthy builds. You can significantly improve build reliability by making all downloads explicit and verifiable, routing them through managed infrastructure, and tightening how and when network access is allowed. Resilient Bazel builds come from treating external dependencies as first‑class operational risks and turning unpredictable third‑party failures into controlled, recoverable events.

With heartfelt thanks to the many people who have already tried hs-bindgen and

given us feedback, we have steadily been working towards the first official

release (see Contributors for the full list). In case you missed

the announcement of the first alpha, hs-bindgen is a

tool for automatic construction of Haskell bindings for C libraries: just point

it at a C header and let it handle the rest. Because we have fixed some critical

bugs in this alpha release, but we’re not quite ready yet for the first full

official release, we have tagged a second alpha release. In the

remainder of this blog post we will briefly highlight the most important

changes; please refer to the CHANGELOG.md of

hs-bindgen and of

hs-bindgen-runtime for the full list of changes, as well as

for migration hints where we have introduced some minor backwards incompatible

changes.

The most important fixes for bugs in the generated code are:

peek and poke for bitfields were broken, which could

lead to segfaults.

We have also resolved a number of panics during code generation, but those would not have resulted in incorrect generated code (merely in no code being generated at all).

Implicit fields arise when one struct (or union) is nested in another, without any field name or tag:

We now support such implicit fields; both the inner (anonymous) struct as well as the corresponding field of the outer struct will be named after the first field of the inner struct [1]:

For this particular case we could also have chosen to flatten the structure

and add y and z directly to Outer, but that does not work in all cases

(for example, when we have an anonymous struct inside a union), so instead

we opt for consistency and always generate an explicit type for the

inner struct.

Unnamed bit-field declarations, which are used to control padding, are now supported:

We used to distinguish between parse predicates (which files should

hs-bindgen parse at all?) and selection predicates (for which C

declarations should we generate Haskell declarations?). This was confusing,

and as we are getting better at skipping over declarations with unsupported

features (and that list is dwindling anyway), parse predicates are not that

useful anymore. Parse predicates therefore have been removed entirely; we

simply always parse everything (selection predicates are still very much an

important feature of course).

Some infrastructure for and around binding specifications has been improved. For example, we now distinguish between macros and non-macros of the same name, and our treatment of arrays has changed slightly. For example, given

we now generate

We do not use Ptr CChar, because T might have an existing binding in

another library (with an external binding specification), and we don’t know

what the type of the elements of T are (it could for example be some

newtype around CChar). Elem is a member of a new IsArray class, part of

the hs-bindgen-runtime.

Top-level anonymous enums are now supported. For example,

results in

(Normally an enum results in a newtype around the enum’s underlying type,

and the patterns are for that newtype instead.)

We now generate bindings for static global variables (such globals are sometimes used in headers that also contain static function bodies).

All definitions required by the generated code are now (re-)exported from

hs-bindgen-runtime, so that it becomes the only package dependency that

needs to be declared (no need for ghc-prim or primitive anymore).

This list is not complete; some other less common edge cases have also been implemented.

Although we are still working on some finishing touches before we can release

the first official version of hs-bindgen, it is already being put to good use

on various projects. There are only a handful of missing C

features left, all of which are low-priority edge cases (though

if you have a specific use case for any of these, do let us know!). So if you

are interested, please do try it out, and let us know if you find any problems.

There should be no major breaking changes between now and the first official

release.

[1] This is the version that uses the

--omit-field-prefixes option, which generates code that relies on

DuplicateRecordFields and OverloadedRecordDot.

The GHC developers are very pleased to announce the release of GHC 9.12.4. Binary distributions, source distributions, and documentation are available at downloads.haskell.org and via GHCup.

GHC 9.12.4 is a bug-fix release fixing many issues of a variety of severities and scopes, including:

Fixed a critical code generation regression where sub-word division produced incorrect results (#26711, #26668), similar to the bug fixed in 9.12.2

Numerous fixes for register allocation bugs, preventing data corruption when spilling and reloading registers (#26411, #26526, #26537, #26542, #26550)

Fixes for several compiler crashes, including issues with CSE (#25468), the simplifier (#26681), implicit parameters (#26451), and the type-class specialiser (#26682)

Fixed cast worker/wrapper incorrectly firing on INLINE functions (#26903)

Fixed LLVM backend miscompilation of bit manipulation operations (#20645, #26065, #26109)

Fixed associated type family and data family instance changes not triggering recompilation (#26183, #26705)

Fixed negative type literals causing the compiler to hang (#26861)

Improvements to determinism of compiler output (#26846, #26858)

Fixes for eventlog shutdown deadlocks (#26573) and lost wakeups in the RTS (#26324)

Fixed split sections support on Windows (#26696, #26494) and the LLVM backend (#26770)

Fixes for the bytecode compiler, PPC native code generator, and Wasm backend

The runtime linker now supports COMMON symbols (#6107)

Improved backtrace support: backtraces for error exceptions are now

evaluated at throw time

NamedDefaults now correctly requires the class to be standard or have an

in-scope default declaration, and handles poly-kinded classes (#25775, #25778, #25882)

… and many more

A full accounting of these fixes can be found in the release notes. As always, GHC’s release status, including planned future releases, can be found on the GHC Wiki status.

GHC development is sponsored by:

We would like to thank these sponsors and other anonymous contributors whose ongoing financial and in-kind support has facilitated GHC maintenance and release management over the years. Finally, this release would not have been possible without the hundreds of open-source contributors whose work comprises this release.

As always, do give this release a try and open a ticket if you see anything amiss.

Athena and Ares argue over human nature, and agree to test three great minds of the age.

First, they approach Aristotle in the Lyceum and propose a bargain. “If you ask it of us, the one you love most in the world will perish, but you will be made rich beyond imagining.” Aristotle barely hesitates. “No,” he says. “To destroy the very purpose of living for the sake of the mere means is the mark of a man who lacks wisdom.”

Next, they approach Plato, finding him pacing in an olive grove of his Academy. They offer the same proposal. “I decline,” he says. “Love allows us to glimpse the ideal of pure beauty, but wealth is an anchor to the material world.”

Finally, they approach Socrates, wandering barefoot in the crowded dusty stalls of the Agora. The gods approach him with the same bargain: “If you ask it of us, Xanthippe, whom you love most in the world, will perish — ”

“I ask it!” he blurts out.

Athena blinks. “You did not even hear the rest. We were going to say you would be given wealth beyond measure.”

Socrates shrugs. “Keep it. This was never about money.”

Millennia later, Athena is still smarting from losing the bet, and she demands a rematch. Searching for another Greek philosopher, they instead find a middle-aged woman writing a novel called Atlas Shrugged. She’s a philosopher, and Atlas was Greek, so that’s close enough.

“If you ask it,” Athena says to her, “we will make you wealthy beyond measure, but then in return, your true love will be taken from you.”

The woman looks up, bored, and asks “Why give me the money if you’re just going to take it right back?”

Peter is a professor at the University of Freiburg, and he was doing functional programming right when Haskell got started. So naturally we asked him about the early days of Haskell, and how from the start Peter pushed the envelope on what you could do with the type system and specifically with the type classes, from early web programming to program generation to session types. Come with us on a trip down memory lane!

This is the thirtieth edition of our Haskell ecosystem activities report, which describes the work Well-Typed are doing on GHC, Cabal, HLS and other parts of the core Haskell toolchain. The current edition covers roughly the months of December 2025 to February 2026.

You can find the previous editions collected under the haskell-ecosystem-report tag.

We offer Haskell Ecosystem Support Packages to provide commercial users with support from Well-Typed’s experts while investing in the Haskell community and its technical ecosystem including through the work described in this report. To find out more, read our announcement of these packages in partnership with the Haskell Foundation. We need funding to continue this essential maintenance work!

Many thanks to our Haskell Ecosystem Supporters: Standard Chartered, Channable and QBayLogic, as well as to our other clients who also contribute to making this work possible: Anduril, Juspay and Mercury; and to the HLS Open Collective for supporting HLS release management.

Matthew Pickering announced that he will be leaving the company and moving to a non-Haskell

role at the end of March.

Working with Matt has been a joy – more than his deep technical insight

or sharp intuition, it’s the warmth of his vision for how to work together and

his generosity that has made him such a force within the team.

He was also a beacon that could rally the community in difficult times, perhaps

most memorably with his technical and social contributions in consolidating

Haskell IDEs with the creation of the Haskell Language Server.

His dedication to tooling has also been an inspiration, with his work on

ghc-debug and on profiling an invaluable contribution to our understanding

of memory usage of Haskell programs.

The Haskell toolchain team at Well-Typed currently includes:

In addition, many others within Well-Typed contribute to GHC, Cabal, HLS and other open source Haskell libraries and tools. This report includes contributions from Alex Washburn, Duncan Coutts, Wen Kokke and Wolfgang Jeltsch in particular.

We are active participants in community efforts for developing the Haskell language and libraries. Rodrigo joined the GHC Steering Committee in December, alongside Adam Gundry. Wolfgang joined the Core Libraries Committee in February.

The Haskell Debugger (hdb) has been made more robust and more features were implemented by Rodrigo, Matthew, and Hannes.

Most notably, the debugger now:

-finfo-table-map for the latter)

To run hdb you need to use GHC 9.14 and configure the IDE accordingly. Please refer to the installation instructions. Apart from that, if HLS just works on your codebase, so should the debugger!

GHC’s eventlog already lets Haskell programs emit rich runtime telemetry, but

the workflow has historically been to run the program to completion and inspect

the eventlog afterwards. eventlog-live

allows us instead to monitor the program as it is running. Wen continued work on

this project, taking significant steps towards making it production-ready, including:

extending eventlog-live with support for the OpenTelemetry protocol

(#119),

bringing the underlying

eventlog-socket library

closer to being ready for general use, by

adding a testsuite (#27),

fixing a litany of issues with the C code

(#38), and

finalising the user-facing API (#43),

adding support for custom commands in

eventlog-socket

(#36).

The Language.Haskell.Syntax module hierarchy is intended to be a stable,

public API for the Haskell AST — one that external tools could eventually depend

on without coupling themselves to GHC internals, reducing ecosystem breakage.

Right now, that goal is undermined by lingering dependencies on internal modules

under the GHC hierarchy.

Alex, with help from Rodrigo, has been systematically removing these edges in the dependency graph:

Language.Haskell.Syntax.Type no longer depends on GHC.Utils.Panic

(!15134, #26626).

Language.Haskell.Syntax.Decls no longer depends on GHC.Unit.Module.Warnings

(!15146, #26636), nor on GHC.Types.ForeignCall (!15477, #26700) or

GHC.Types.Basic (!15265, #26699).

Language.Haskell.Syntax.Binds no longer depends on GHC.Types.Basic

(!15187, #26670).

Once this work is done, it will be possible to consider moving the AST into a separate package, and taking further steps towards increasing modularity of the compiler.

base package

Historically, the base package was used as both the user-facing standard

library and a repository of GHC-specific internals, with much special treatment

in the compiler. This means GHC and base versions are tightly coupled, and

makes upgrading to new compiler versions unnecessarily difficult.

GHC developers have made significant progress towards making base a normal Haskell

package: ghc-internal has been split out as a separate library, base no

longer has a privileged unit-id in the compiler, and Cabal now allows

reinstalling it.

Matt posted a summary of progress and outlined possible next steps to seek community consensus on the direction of travel. The reinstallable-base repository collects documents and discussion on the effort.

Wolfgang continued various pieces of technical groundwork:

cleaning up many unused known-key names in the compiler (!15184, !15190, !15211, !15213, !15217, !15218, !15219, !15215),

finishing the process of removing GHC.Desugar from base (!15433),

refining the import list of System.IO.OS to aid in modularity (!15567).

Wolfgang improved the public API of base relating to OS handles, to make the

API more stable across platforms and avoid the need for users to depend on

GHC-internal implementation details (!14732, !14905). While in the area, he

fixed a bug in the implementation of hIsReadable and hIsWritable for duplex

handles (#26479, !15227), and a mistake in the documentation of hIsClosed

(!15228).

GHC bug #26416 has occupied the attention of the team for quite some time. Initially thought to be an issue with specialisation, a reproducer that Sam and Magnus created showed that the issue is in fact a bug in absence analysis — an optimisation that identifies and removes unused function arguments — in which GHC would erroneously conclude that a used argument was in fact absent.

Andreas helped investigate the root cause, before Zubin finally took the torch and put up a solution (!15238).

GHC’s changelogs have not always been as complete or reliable as the community deserves. Keeping changelogs accurate across backports has also been a major source of frustration for release managers.

This is why, after a discussion initiated by Teo Camarasu in #26002, we have decided

to adopt the changelog.d system —

already in use by the Cabal project — in which each change is a separate file

in the changelog directory.

This eliminates the merge conflicts that make backporting painful, and makes it

easier to associate MRs with changelog entries.

Zubin has been spearheading the effort, with the intention to switch to this new method of changelog generation right after the fork date for GHC 10.0.

Sam reviewed the implementation of the QualifiedStrings

extension by Brandon Chinn (!14975).

This allows string literals of the form ModName."foo"

(interpreted as ModName.fromString ("foo" :: String)).

Sam made several changes to the treatment of Coercible constraints in the

typechecker (!14100):

Coercible constraints are greatly

improved, an oft-requested improvement (#15850, #20289, #23731, #26137).

Error messages now consistently mention relevant out-of-scope data

constructors, provide import suggestions, and include additional

explanations about roles (when relevant).

Magnus implemented several fixes to the implementation of ExplicitLevelImports:

Sam improved the reporting of “valid hole fits”, adding support for suggesting bidirectional pattern synonyms (#26339) and properly dealing with data constructors with linear arguments (#26338).

Sam investigated a typechecking regression starting in GHC 9.2 with the

introduction of the Assert type family to improve error messages involving

comparison of type-level literals (#26190), posting his analysis to the ticket.

To tackle this, he opened GHC proposal #735, which is still in need of further community feedback.

Sam minimised a bug with rewrite rules (#26682), which allowed Simon Peyton Jones to identify and fix the bug (!15208).

Sam improved how existential variables are displayed in Haddock documentation (!15099, #26252).

Typeable evidence (#26846, !15442).

singletons library producing non-deterministic names

(singletons #629,

th-desugar #240).

Sam finished up and landed a long-standing MR by Chris Wendt (!10133) which fixed a plugin-related issue.

Sam fixed a regression in ghc-typelits-natnormalise

in which the plugin would cause GHC to fall into an infinite loop

(ghc-typelits-natnormalise #116, #118).

Rodrigo announced that work described in #23218 evolved into the POPL 2026 paper “Lazy Linearity for a Core Functional Language”, which presents a way to type linearity in GHC Core that is robust to almost all GHC optimisations, together with a GHC plugin validating programs at each optimisation stage.

With the oversight of Andreas, Sam carefully reconsidered the treatment of register formats in the register allocator and liveness analysis. This culminated in !15121:

@aratamizuki on !15121.

Sam put up a small fix for the mapping of registers to stack slots, fixing an oversight in the case that registers start off small and are subsequently written at larger widths (#26668, !15185).

Sam reviewed a GHC contribution by @sgillespie adding SIMD primops for

abs and sqrt operations (!15236), suggesting more efficient implementations of

certain operations.

Andreas investigated potential missed specialisations, which allowed Simon Peyton Jones to make further progress in improving the specialiser (#26831, !15441).

Sam investigated several bugs to do with the interactions of join points with

ticks (#14242, #26157, #26642, #26693) and casts (#14610, #21716, #26422).

He fixed the main bug (#26642, !15538), which was due to incorrect

transformations in mergeCaseAlts. He also undertook a general refactor of

the area, pinning down the overall handling of casts and ticks under

join points in a Note.

Matt fixed a decoding failure for stg_dummy_ret by using INFO_TABLE_CONSTR

for its closure (#26745, !15303).

Duncan fixed long-standing inconsistencies in eventlog STOP_THREAD status

codes (#26867, !15522).

Andreas improved the documentation of the -K RTS flag in !15365 (#26354).

Matt and Hannes improved the reporting of backtraces when using error

(!15306, !15395, #26751). This involved opening two CLC proposals

(CLC #383,

CLC #387).

Hannes continued working on the implementation of stack annotations and stack decoding (#26218), including:

ghc-stack-profiler,

a profiler that relies on stack annotations instead of heavier profiling

mechanisms, with the eventlog-socket

library; and

ghc-stack-annotations

compatibility library for annotating the stack.

Rodrigo removed an incorrect assertion that fired when decoding a BCO whose bitmap has no payload (#26640, !15136).

Zubin fixed a GHC 9.14.1 build issue due to missing .cabal files for

ghc-experimental and ghc-internal in the source tarball (#26738, !15391).

Andreas investigated the use of Cabal’s --semaphore feature to speed up GHC builds slightly (#26876, !15483).

There are some issues preventing us from enabling this unconditionally

(#26977, Cabal #11557).

Magnus ensured the user’s guide can be generated with old versions of Python to fix CI build failures on some older containers (!15127).

Magnus updated the Debian images used for CI (ci-images !183,

!178).

Sam finished up the work of Sven Tennie on testing floating point expressions

in the test-primops test

framework for GHC (test-primops !19).

This is preparatory work for improving the robustness of GHC’s handling of

floating point (#26919).

Andreas updated the nofib GHC benchmarking suite to fix issues that Sam ran

into when trying to use it, updating the CI in the process

(nofib !81, !82, !83).

Magnus worked on the infrastructure for the GitLab instance used for the GHC project, bringing up new runners for CI and switching to a new verification system for approving new users, which makes it easier for new contributors to open issues.

Magnus and Andreas helped the Haskell infrastructure team address GitLab outages on short notice in order to improve availability of the GHC GitLab instance.

Andreas and Magnus organized temporary CI capabilities sponsored by WT during a temporary outage of one of GHC’s CI runners.

Sam added support for setting the logging handle via the library interface of Cabal,

a significant milestone in updating cabal-install to compile packages with

the Cabal library without invoking external processes (Cabal #11077).

Matt helped Matthías Páll Gissurarson to fix a bug in which cabal haddock was looking for

files in the wrong directory (Cabal #11475, #11476).

Matt fixed a bug causing broken Haddocks locally due to a non-expanded ${pkgroot}

variable (Cabal #11217,

#11218).

Matt fixed some issues with cabal repl silently failing

(Cabal #11107,

#11237).

In collaboration with Zubin and Andreas, Hannes investigated the root cause of

HLS issue #4674,

posting his analysis in this comment.

In short, the problem was that the hlint plugin was using an incompatible

version of ghc-lib-parser, and a version mismatch in this library was causing

segfaults due to changes to the GHC.Data.FastString implementation between

the versions.

Hannes disabled the hlint plugin on GHC 9.10 to work around this issue

(HLS PR #4767).

Hannes reviewed and assisted with HLS PR #4856

by @vidit-od. This PR makes HLS use the stored server-side diagnostics for

code actions, in order to make them more responsive. This fixes HLS issue #4805.

Hannes helped land long-running HLS PR #4445

by @soulomoon, which allows files to be loaded concurrently in batches in

order to improve responsiveness of HLS.

Zubin and Hannes worked together to update HLS to work with GHC 9.14 (HLS PR #4780).

Hannes worked on general maintenance of the HLS project:

Hannes and Zubin implemented some fixes to Windows CI (HLS PR #4800, HLS PR #4768).

Hannes merged the hls-module-name-plugin into hls-rename-plugin in

HLS PR #4847.

Hannes improved the robustness of the hls-call-hierarchy-plugin-tests in

HLS PR #4834

by using VirtualFileTree.

Hannes also worked on hie-bios:

(hie-bios PR #496)

(hie-bios PR #495)

OsPath (hie-bios PR #493)

Rodrigo continued work on the new Haskell Debugger.

Matt and Rodrigo introduced a DSL for evaluation on the remote process, which allows the debuggee to be queried from a custom instance, making it possible to implement visualisations which rely on e.g. evaluatedness of a term (#139).

Matt improved support for exceptions: break-on-exception breakpoints now provide source locations (#165).

Rodrigo allowed call stacks to be inspected in the debugger (#158).

Hannes introduced support for stack decoding and viewing custom stack annotations (#172).

Rodrigo made the Haskell Debugger use the external interpreter (#170), which paves the way for multi-threaded debugging (see also #140). This change also allowed Rodrigo to implement Windows support (#184) with the help of Hannes.

Matt fixed a bug in the handling of data constructors with constraints (#175).

Hannes improved caching in the CI (#173).

ghc-debug

Matt and Hannes fixed several issues with AP_STACK closures

(!79,

!80,

!86).

Hannes implemented asynchronous heap traversal in ghc-debug-brick,

making the interface more responsive

(!78).

Hannes added history navigation and search caching to the ghc-debug-brick

interface

(!83).

Hannes added a summary row to the string counting table view (!81), and fixed the search limit not being honoured during incremental searches (!76).

A couple of years back I was discussing the Rhind Mathematical Papyrus

(RMP). It includes a table expressing $\frac 2n$ as a sum

$$\frac1{a_1}+\frac1{a_2}+\dots+\frac1{a_k} $$ of fractions with

numerator 1 (“unit fractions”). I said:

Getting the table of good-quality representations of $\frac 2n$

is not trivial, and requires searching, number theory, and some trial and error. It's not at all clear that

.

Today I wondered: did Ahmes (the author) have the best possible

expansions for all the values, or were there some

improvements the Egyptians had missed?

It turns out, yes! Or rather, maybe!

In

On the Egyptian method of decomposing into unit fractions

the author, Abdulrahman A. Abdulaziz, points out that for $\frac2{95}$

the Rhind Mathematical Papyrus gives the expansion

$$\frac2{95} = \frac1{60} + \frac1{380} + \frac1{570}$$

but $\frac1{380} + \frac1{570} = \frac1{228}$, so it could have been

written as $$\frac2{95} = \frac1{60}+\frac1{228}.$$

But wait, maybe that wasn't an error. The Egyptians, like everyone,

often had to multiply by 10. (In fact, the RMP itself, right after

its $\frac 2n$ table, has a shorter table of expansions of

$\frac n{10}$.) And $\frac1{380} + \frac1{570}$ is trivially

multiplied by 10, whereas $\frac1{228}$ isn't. There is some

indication that Ahmes preferred fractions with even denominators,

because they are easier to double, and the usual Egyptian method of

multiplication required repeated doubling. But the Egyptians also

sometimes decupled while multiplying, and the

$\frac1{60} + \frac1{380} + \frac1{570}$ expansion would have made both of those

easy.
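The arithmetic behind this argument is easy to check exactly. The following is only a small sketch using Python's standard `fractions` module; the helper name `unit_sum` is made up for illustration and is not taken from the paper or the papyrus.

```python
from fractions import Fraction

def unit_sum(*denominators: int) -> Fraction:
    """Exact sum of the unit fractions 1/d for the given denominators."""
    return sum((Fraction(1, d) for d in denominators), Fraction(0))

rmp_expansion = unit_sum(60, 380, 570)   # the expansion given in the papyrus
short_expansion = unit_sum(60, 228)      # the shorter two-term alternative

# Both expansions equal 2/95, because 1/380 + 1/570 collapses to 1/228.
assert rmp_expansion == short_expansion == Fraction(2, 95)
assert unit_sum(380, 570) == Fraction(1, 228)

# Multiplying by 10: the papyrus's two small terms stay unit fractions,
# while the single term 1/228 becomes 5/114 and would need a new expansion.
assert 10 * unit_sum(380, 570) == unit_sum(38, 57)
assert 10 * Fraction(1, 228) == Fraction(5, 114)
```

The last two assertions are the point: ten times the longer form is just $\frac1{38}+\frac1{57}$, with no further work needed.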

The methods by which Ahmes chose the expansions of $\frac 2n$, and

the criteria by which he preferred one to another, are still unknown;

he doesn't explain them. So it's tough to say that any item was or

wasn't “best” from Ahmes' point of view.

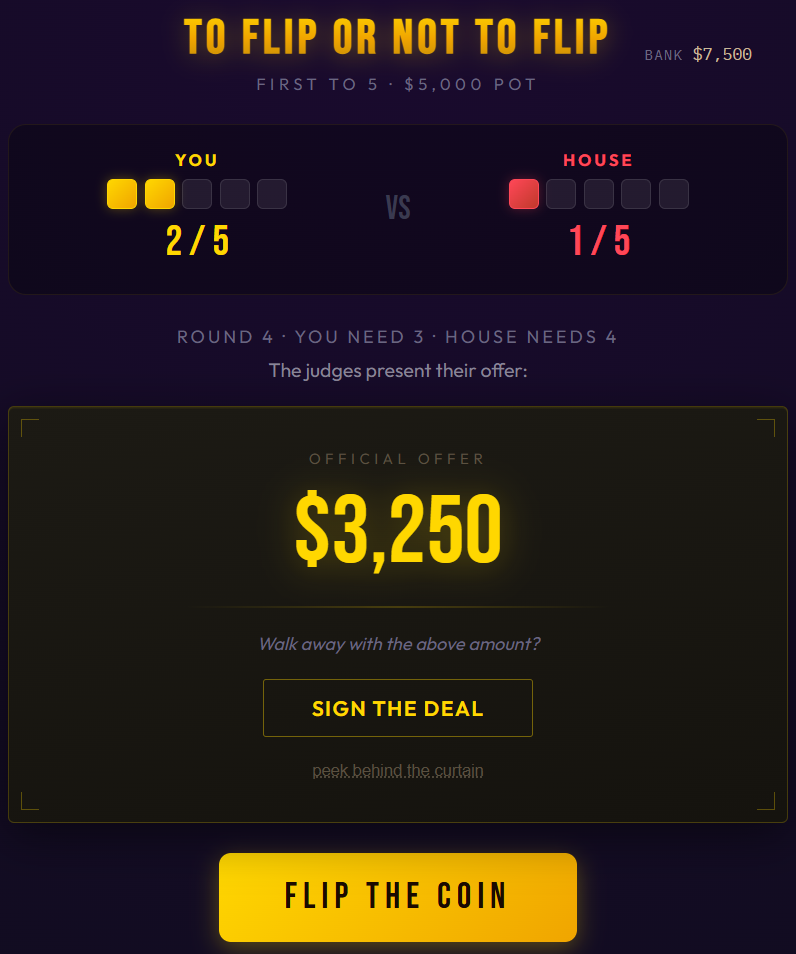

A fair coin, an unfair offer, and the price of certainty.

I sat down to work out a classic probability problem numerically, and accidentally built a casino.

In 1654, a gambler named Antoine Gombaud posed a question to Blaise Pascal: two players are in a race to win a certain number of points. The game is interrupted. How should they divide the pot?

Pascal wrote to Fermat, and their correspondence became one of the founding documents of probability theory. The answer is elegant: if you need a more points and your opponent needs b more, you can compute the fair split with a simple recurrence. Let P(a, b) be your probability of winning:

$$P(a, b) = \tfrac12 P(a-1, b) + \tfrac12 P(a, b-1), \qquad P(0, b) = 1, \quad P(a, 0) = 0.$$

Every value in this table is a fraction with a power-of-2 denominator, and the numerators are running sums along the rows of Pascal’s triangle. Beautiful math, clean solution, problem solved since the 17th century.

I built an interactive table to explore it. And then I thought: what if this were a game?

You and The House race to a target score. Each round, a fair coin is flipped — heads you score, tails The House scores. First to the target wins a pot of money.

But before each flip, judges look at the current game state, consult the probability table, and offer you cash to walk away. Accept, and you take the money. Decline, and the coin is flipped.

The question, every single round, is: to flip or not to flip?

You can play at willowdale.online/flip.

The judges know the exact fair value of your position — they have the same formula Pascal and Fermat computed. If you have a 37.5% chance of winning a $10,000 pot, your fair value is $3,750.

But they don’t offer fair value. They offer the nearest “clean” fraction of the pot that sits strictly below your true odds.

“Clean” means small denominators whose only prime factors are 2, 3, and 5 — fractions like 1/3, 3/8, 7/20, nothing with a denominator above 20. These produce dollar amounts that look like something a human came up with: $3,333, $3,750, $3,500. Not $3,077 or $3,846, which look like someone ran the numbers to the last penny.

So if your fair value is $3,770 (193/512 of the pot), the judges offer $3,750 (3/8). Barely below fair, and a beautifully round number. If your fair value is $1,875 (3/16), they offer $1,666 (1/6). An 89% offer — a real discount, but still a clean, human-sounding number.
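The rule as described can be sketched in code. This is an assumption-laden reading of the prose, not the game's actual implementation: it takes "clean" literally as every fraction whose denominator is at most 20 and has only 2, 3, and 5 as prime factors, and it truncates to whole dollars. The game's real list of fractions and its rounding may differ, and all names here are hypothetical.

```python
from fractions import Fraction

MAX_DENOMINATOR = 20  # "nothing with a denominator above 20"

def is_clean_denominator(d: int) -> bool:
    """True if d has no prime factors other than 2, 3, and 5."""
    for p in (2, 3, 5):
        while d % p == 0:
            d //= p
    return d == 1

# Every clean fraction between 0 and 1 inclusive (assumed set; see the note above).
CLEAN_FRACTIONS = sorted({
    Fraction(n, d)
    for d in range(1, MAX_DENOMINATOR + 1) if is_clean_denominator(d)
    for n in range(0, d + 1)
})

def offer_fraction(fair: Fraction) -> Fraction:
    """Largest clean fraction strictly below the fair share (0 if there is none)."""
    below = [f for f in CLEAN_FRACTIONS if f < fair]
    return max(below, default=Fraction(0))

def offer_dollars(fair: Fraction, pot: int = 10_000) -> int:
    """Cash offer for a given fair share of the pot, truncated to whole dollars."""
    return int(offer_fraction(fair) * pot)

print(offer_fraction(Fraction(193, 512)), offer_dollars(Fraction(193, 512)))
print(offer_fraction(Fraction(3, 16)), offer_dollars(Fraction(3, 16)))
```

Under these assumptions the two examples above come out as 3/8 and 1/6 of the pot. Note that 3/16 is itself a clean fraction, which is why "strictly below" matters: the offer has to drop to the next clean value down.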

This matters psychologically. Round numbers feel like ballpark estimates — casual, generous, not fully analyzed. Precise numbers feel calculated. When the judges offer $7,500, it sounds reasonable. If they offered $7,517, you’d immediately suspect they did the math and it’s in their favor. The irony is that $7,517 is a better deal for you — but I think you’d be less likely to take it. The round number keeps your guard down.

The algorithm is deterministic — same game state, same offer every time. Just math dressed up in a game show contract.

Since the offers are always strictly below fair value, the play that maximizes your expected winnings is to never accept a deal. The coin is fair, the game has zero house edge, and every offer leaves money on the table. A player who always flips would win 50% of their games and, on average, neither gain nor lose.

And yet.

When you’re ahead 4–3 in a race to 10, and the contract says $6,000, and you’ve already paid $5,000 to enter this game… you hesitate. That’s a guaranteed profit. The alternative is variance — maybe you win $10,000, but you are not that far ahead. Maybe your luck turns and you lose everything.

You know the offer is below fair. You can peek behind the curtain and see the exact numbers. The judges are shortchanging you by $128. But $128 feels like nothing when the alternative is watching your lead evaporate flip by flip.
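That figure can be reproduced by hand, assuming a pot of 10,000 dollars and a fair coin. Ahead 4–3 in a race to 10, you need 6 more points and The House needs 7, so at most 12 more flips settle the race, and you win exactly when at most 6 of those 12 come up tails:

$$P(6, 7) = \frac{1}{2^{12}} \sum_{k=0}^{6} \binom{12}{k} = \frac{1 + 12 + 66 + 220 + 495 + 792 + 924}{4096} = \frac{2510}{4096} \approx 0.6128$$

That puts the fair value at roughly 6,128 dollars, which is 128 dollars more than the 6,000 on the contract.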

So you sign. And $128 goes into the casino’s pocket.

This is what makes the game unusual. In blackjack or roulette, the house edge is baked into the rules — you can’t avoid it no matter how disciplined you are. Here, the game has no edge at all. The coin is fair. The race is symmetric. The only source of profit is human nature. Every dollar the casino makes is expected value that a player voluntarily left on the table.

Play for a while and you start to notice specific situations where the offer gets harder to refuse.

Managing risk. A guaranteed $7,500 is safer than a coin flip worth $7,734. In real life, you might need that money for rent. Variance has a real cost, and paying a premium for certainty can be entirely rational. There is a sophisticated argument for sometimes making decisions that reduce your expected value: bankroll management, survival probability and duration. Here the stakes are fictional, your bankroll buys nothing except more fair coin flips, and going broke is solved by refreshing your web browser, so that case is weaker — but it doesn’t feel weaker when your bankroll is shrinking and the judges are holding out real-looking money.

Mis-anchoring. The rational comparison is always between the offer and the expected value of continuing to flip. But that’s rarely the comparison your brain actually makes. If you were staring at a $0 offer last round and now the judges are offering $500, you’re comparing to the $0 — not to the $625 fair value. If your bankroll started at $10,000 and you’re down to $7,000, and the judges offer $3,200, you’re comparing to $10,000 — because taking the deal would put you above where you started. In both cases, the reference point that feels relevant has nothing to do with the expected value of this game.

Black and white thinking. When you’re behind in the race, the most likely single outcome is that you lose. If the judges offer $500 and your odds of winning are 6%, it feels like a choice between $500 and nothing. But expected value accounts for the 6% — the rare wins are big enough to compensate for all the losses across many games. You just don’t experience many games at once. You experience this one, where you’ll probably lose, and where the person who took $500 looks smart 94 times out of 100.

Imaginary momentum. You lose three flips in a row and it feels like the coin has turned against you — time to take the deal before things get worse. Or you win three in a row and feel like you’re on a streak that shouldn’t be interrupted. The coin has no memory. Each flip is independent. But the human brain is a pattern-recognition machine, and it will find narratives in random sequences whether they’re there or not.

The judges in this game are clever, but simple — they mechanically pick the nearest clean fraction below fair value, blind to everything except the current expected value.

But the optimal offer would be very different. The right objective isn’t just the EV gap (fair value minus offer). It’s: